A team of two Canadian researchers is creating fabrics able to change color and shape. Concordia University associate professor Joanna Berzowska and École Polytechnique de Montréal professor Maksim Skorobogatiy developed different types of smart textiles with technology woven into the fiber and have created prototype garments able to change shape and color. One of their prototype garments is constructed with a pleated structure into which photonic band-gap fibers are woven. Custom electronics control how these fibers are lit, which creates different patterns and textures. The technology could also potentially capture energy from human movement that could, for example, charge a mobile telephone. (EurekAlert)(Concordia University)

Friday, May 24, 2013

Taiwan Investigates Samsung Practices

Taiwanese fair-trade officials have reportedly launched an investigation into Samsung’s business practices after allegations surfaced that the South Korean technology company paid individuals to submit critical reviews of products by Taiwanese rival HTC. The company reportedly hired students to post unfavorable reviews of HTC phones and to suggest that consumers purchase unlocked Samsung handsets instead. Samsung hasn't formally responded to the charges. However, Samsung’s Taiwan Facebook page reportedly said that the company regretted any confusion and inconvenience its Internet marketing may have caused and that it “has halted all Internet marketing such as posting articles on websites.” If found guilty, Samsung could be fined up to 25 million Taiwanese dollars (about $840,000 at press time). (BBC)(InformationWeek)(TechCrunch)(AFP)

Wednesday, May 22, 2013

Security-Application Update Disables Computers Worldwide

A faulty update from security vendor Malwarebytes issued Tuesday afternoon reportedly left users worldwide without computer access after the software identified essential, legitimate Windows components as malware and disabled them. The problem was created by a faulty update definition that marked Windows .dll and .exe files as malware. Malwarebytes said it took the update off its servers as soon as it realized there was a problem, which occurred within eight minutes of deployment. The company said in a blog post that it is re-evaluating its update policy to prevent this from occurring again. The ongoing fight against new and fast-moving cyberthreats and the need to update applications make faulty updates a “constant danger,” said Rik Ferguson, global vice president of security research at security vendor Trend Micro. (SlashDot)(V3.co.uk)(Malwarebytes)

Market Research Firm Blames Windows 8 for PC Sales Drop

Analysts with IDC, a market research firm, say Windows 8 is responsible for the recent significant drop in PC sales because it confused consumers. The new operating system “not only failed to provide a positive boost to the PC market, but appears to have slowed the market,” according to a statement from Bob O’Donnell, IDC Program VP Clients and Displays. According to IDC, global PC shipments dipped 13.9 percent through the first three months of 2013, compared with the same time period last year. This is the largest drop since the firm began tracking quarterly desktop-computer sales in 1994. O’Donnell added that, although some consumers seem to appreciate the new capabilities, “the radical changes to the [user interface], removal of the familiar Start button, and the costs associated with touch have made PCs a less attractive alternative to dedicated tablets and other competitive devices.” Analysts originally forecast that first-quarter 2013 PC sales would dip 7.7 percent. This is the fourth consecutive quarter of year-over-year shipment declines. (SlashDot)(ABC News)(ZDNet)(Mashable)

Tuesday, May 21, 2013

New Techniques Perform 3D Modeling of the Human Heart

A team of University of Minnesota surgeons and biomedical engineers is using new technologies to create a digital library of human heart specimens and enable 3D computer modeling and mapping of hearts. This capability could let researchers see the structure and function of cardiac tissue, enabling them to better understand variations in the heart and how it changes in the presence of disease. It could also aid in the design of new cardiac devices. The University of Minnesota techniques use contrast-computed tomography, which uses dyes in the imaging process so that blood vessels and other structures can be seen more clearly. The researchers are using human heart specimens from organ donors that have been found not to be usable for transplant. They published their work as a Journal of Visualized Experiments video article. (EurekAlert)(http://www.eurekalert.org/pub_releases/2013-04/tjov-sst041613.php)

Monday, May 20, 2013

WordPress Botnet Continues Growing

A recent series of attacks against WordPress blogs is creating a growing botnet, according to security researchers. The attacks, which focus on individuals whose WordPress username is “admin,” use brute force to try to crack the password for signing into the blog. The botnet reportedly now consists of 90,000 or more computers. Security experts are concerned the botnet could continue growing and create a massive problem. The attacks reportedly started after WordPress began offering an optional two-step authentication login. Once a website is infected, it is equipped with a backdoor. This lets the hackers control the site remotely and make it part of the botnet. (BBC)(Matt Mullenweg)(Krebs on Security)

Sunday, May 19, 2013

[Conference News] “The Good, the Bad, and the Ugly” in Face-Recognition Systems

Face recognition is an active area of computer-vision and pattern-recognition research. The Good, the Bad, and the Ugly (GBU) Challenge Problem is a recent effort to build on earlier successful evaluations of face-recognition systems relative to illumination, pose, expression, and age. GBU focuses on “hard” aspects of face recognition from still frontal image pairs that aren’t acquired under studio-like controlled conditions. The image pairs are partitioned into the good (easy to match), the bad (average matching difficulty), and the ugly (difficult to match).

In a paper presented at the 2012 IEEE Workshop on the Applications of Computer Vision (WACV 2012), researchers from the University of Notre Dame investigate image and facial characteristics that can account for the observed significant differences in performance across these three partitions. Their analysis indicates that the differences reflect simple but often ignored factors such as image sharpness, hue, saturation, and extent of facial expressions.

“Predicting Good, Bad and Ugly Match Pairs” and other papers from WACV 2012 are available to both IEEE Computer Society members and paid subscribers via the Computer Society Digital Library.

Saturday, May 18, 2013

Pending US Immigration Legislation Would Increase Visas for Technology Workers

A comprehensive proposed US immigration bill would raise the ceiling for H-1B visas, used in part to let domestic companies hire technology professionals from other countries. The Border Security, Economic Opportunity, and Immigration Modernization Act of 2013 would raise the ceiling from 65,000 to 110,000 and eventually perhaps 180,000. In addition, the bill would require the US Labor Department to create a website to which employers must post job openings at least 30 calendar days before hiring an H-1B applicant for the position. This is designed to make sure companies try to fill openings with US citizens or legal residents first. In the past, H-1B visas have been controversial. US technology firms say the limit should be raised so that they can hire the best available employees to fill openings for which they can’t find domestic workers. Organizations representing US engineers have contended that companies want to hire lower-paid workers from outside the country. The Border Security, Economic Opportunity, and Immigration Modernization Act of 2013 would also exempt people with doctorates in science, technology, engineering, and mathematics from employment-based permanent-resident visa limits, enabling more of them to live and work in the US. Supporters say they want to see the legislation passed by June of this year. (SlashDot)(Computerworld)(CBSNews)

Friday, May 17, 2013

UK Spectrum Auction Probed

The UK’s National Audit Office is investigating the June 2012 auction of 4G wireless spectrum by British telecom regulator Ofcom to determine whether it was handled in a way that would yield a fair amount of revenue for the government. The auction generated £2.3 billion (about $3.54 billion at press time) rather than the anticipated £3.5 billion (about $5.38 billion). The UK government had factored the expected earnings into its national budget, so the shortfall could cause fiscal problems. Some industry observers say that had there been more aggressive bidding, the auction could have yielded as much as £4 billion (about $6.15 billion). (SlashDot)(TechWeek Europe)(The Guardian)

Thursday, May 16, 2013

Researchers Create Powerful Microbatteries

A University of Illinois at Urbana-Champaign research team has successfully developed new microbatteries that are reportedly the most powerful ever documented. A microbattery is a miniaturized solid-state electrochemical power source that could be used in small items such as medical devices or RFID tags. This new technology could be used to create new compact radio-communications and electronics applications such as lasers, sensors, and medical devices. The millimeter-sized batteries provide both high power and high energy, whereas conventional battery technologies force a tradeoff between the two. Typically, capacitors release energy very quickly but can store only a small amount of it, while fuel cells and batteries can store a great deal of energy but release or recharge slowly. These high-performance batteries contain a fast-charging cathode with an equally high-performance, microscale anode. Researchers say they can tune the battery such that it has the optimal power and energy capabilities for the specific application. The new technology could be used in transmitters able to broadcast radio signals 30 times farther than conventional technology, the researchers said. These small batteries could also reportedly recharge 1,000 times faster than conventional technologies, they added. The scientists are now working on lowering their batteries’ cost and integrating them with other electronics components. They published their results in the journal Nature Communications. (EurekAlert)(University of Illinois at Urbana-Champaign)

Wednesday, May 15, 2013

UCLA Researchers Make New Material for High-Performance Supercapacitors

University of California, Los Angeles scientists have created a material they say could be used to create powerful supercapacitors. The material, a synthesized form of niobium oxide, could be used to rapidly store and release energy. The technology could be used to rapidly charge many devices, including mobile electronics and industrial equipment. The scientists have developed electrodes using the material, but must undertake more research to create entire quick-charging devices. Researchers from Cornell University and the Université Paul Sabatier contributed to the work, which was published in the journal Nature Materials. (EurekAlert)(University of California Los Angeles)(Nature Materials)

Tuesday, May 14, 2013

Google Proposes Concessions in EU Antitrust Case

Google formally submitted a concession package to European Union regulators in hopes of ultimately settling antitrust allegations without incurring either formal charges or a fine. These concessions have not been made public, but industry observers say the Internet search giant has proposed labeling its own services in search results, such as results from YouTube, and easing restrictions on advertisers by allowing them to export analytical data and permitting them to move to competitors’ services. These concessions will reportedly be the first time Google has responded to any type of regulatory pressure. The EU has been investigating various complaints against Google for its business practices, such as allegedly manipulating search results, since 2010. (Reuters)(Mail Online)

Monday, May 13, 2013

Researchers: Wireless-Cloud Energy Consumption and CO2 Emissions Will Be Huge

Centre for Energy Efficient Telecommunications researchers have forecast that global wireless-cloud access will generate as much carbon dioxide as 4.9 million cars by 2015. CEET estimates that Wi-Fi, 3G, and long-term evolution (LTE) services will use up to 43 terawatt-hours of energy in 2015, compared to just 9.2 TWh in 2012. This is an increase of 367 percent and is based on estimates that cloud users will transfer 23 exabytes (an exabyte is 10^18 bytes) of data per month by 2015. The Australia-based CEET is a partnership between the University of Melbourne, Alcatel-Lucent, Bell Labs, and the Victorian state government. (ZDNet)(Centre for Energy Efficient Telecommunications)
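
The 367 percent figure follows from the two energy estimates quoted above. The short Python check below is only an illustration of that arithmetic; the function and variable names are ours, not CEET's.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the percentage increase going from old to new."""
    return (new - old) / old * 100.0


# Figures quoted in the item above, in terawatt-hours.
energy_2012_twh = 9.2
energy_2015_twh = 43.0

increase = percent_increase(energy_2012_twh, energy_2015_twh)
print(f"Increase from 2012 to 2015: about {increase:.0f} percent")
```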

Important Challenges Face New FCC Head

When US Federal Communications Commission chair Julius Genachowski leaves his post “in the coming weeks,” there will likely be a heap of expectations facing his successor. President Barack Obama has yet to name a replacement and no firm date for Genachowski’s last day at the agency has been released, but Capitol Hill and industry pundits are rife with opinions as to what direction the agency head should go. For example, Phil Weiser, dean of the University of Colorado law school and a former senior presidential adviser, claims this could be a time to redefine the FCC’s role, which could include improving its enforcement capabilities and allowing it to be more responsive to emerging issues through self-regulation. He told the Washington Post that one of the main priorities will be “freeing up wireless spectrum not only for consumers but also for machine-to-machine communications.” He added, “A core challenge for the FCC and the government is to create more access to spectrum, which will enable more entrepreneurs, companies, and individuals to use it in interesting ways. In addition to freeing up licensed spectrum, the government could also make available additional unlicensed spectrum.” The leading candidates for the FCC post are reportedly Tom Wheeler, a venture capitalist who has led wireless and cable trade groups, and Jessica Rosenworcel, an FCC commissioner backed by US Senate Commerce Committee Chair Jay Rockefeller and 37 other senators. (The Washington Post)(Reuters)

Sunday, May 12, 2013

Popular Android Applications Contain Security Flaws

Researchers from the University of California, Davis, discovered security flaws in roughly 120,000 free applications for the Android smartphone, including several popular texting, messaging, and microblogging programs. These vulnerabilities could be exploited by malware that could then allow hackers to access users’ private information or post fraudulent messages using social media. The UC Davis researchers found that developers of these applications didn’t secure parts of the code. In the WeChat service, for example, they were able to use malicious code to turn off the WeChat background service such that a user would think the service was still working when it was not. They have notified the developers of the flaws they found. (EurekAlert)(University of California Davis)

Friday, May 10, 2013

Coral-Repairing Robots Heal Damaged Reefs

Scientists from Heriot-Watt University’s Centre for Marine Biodiversity and Biotechnology are developing underwater robots able to repair coral reefs. They have, to date, built prototype coralbots with an onboard camera, computer, and flexible arms and grippers that let them reattach healthy pieces of coral to a reef. Typically, scuba divers have to undertake this process. However, they often cannot work on deep reefs. The researchers’ long-term goal is creating a swarm of eight robots capable of autonomously navigating and working on reefs throughout the world. To this end, they are refining aspects of the robot, including its computer-vision system and arm. They launched a Kickstarter crowdfunding campaign in hopes of raising $107,000 to create two robots that will be demonstrated on a coral reef in a public aquarium. (SlashDot)(Gizmag)

Thursday, May 9, 2013

[Conference News] Artifact Cloning in Industrial Software Product Lines

Many companies develop software product lines by cloning and adapting artifacts of existing variants, but their development practices in these processes haven’t been systematically studied. This information vacuum threatens the approach’s validity and applicability and impedes process improvements.

An international group of industry and academic researchers presented a paper at the 2013 17th European Conference on Software Maintenance and Reengineering (CSMR 2013) that characterized the cloning culture in six industrial software product lines realized via code cloning. The paper describes the processes used, as well as their advantages and disadvantages. The authors further outline issues preventing the adoption of systematic software-reuse approaches and identify future research directions.

“An Exploratory Study of Cloning in Industrial Software Product Lines” and other papers from CSMR 2013 are available to both IEEE Computer Society members and paid subscribers via the Computer Society Digital Library.

Sensitive Tactile Sensor Lets Robotics Work with Fragile Items

Harvard School of Engineering and Applied Sciences researchers in the Harvard Biorobotics Laboratory have developed an inexpensive tactile sensor for robotic hands that is sensitive enough to enable a robot to gently manipulate fragile items. They designed their TakkTile sensor primarily for users such as commercial inventors, teachers, and robotics enthusiasts. They made the sensor with an air-pressure-sensing barometer, commonly found in cellular phones and GPS units in which it takes altitude measurements. This enables it to detect a very slight touch. The sensor would let a mechanical hand recognize that it is touching a fragile item and enable it to, for example, pick up a balloon without popping it. The researchers say TakkTile could also be used for devices such as toys or surgical equipment. Harvard University officials say the university plans to license the technology. (EurekAlert)(Harvard University)

Wednesday, May 8, 2013

Microsoft Inks Deal with Hardware Maker over Alleged Misuse of Intellectual Property

Microsoft has reached an agreement in which Hon Hai, the world’s biggest consumer electronics manufacturer, will pay Microsoft patent royalties related to devices powered by Google’s Android and Chrome operating systems. The deal protects Hon Hai, parent company of manufacturer Foxconn Electronics, from being sued by Microsoft, which contends the Google code in the devices uses Microsoft’s intellectual property. This is Microsoft’s nineteenth announced Google-related patent license deal—which includes those with companies such as Acer, HTC, Nikon, and ViewSonic—since 2010. Rather than sue Google, Microsoft has sought royalties from hardware makers using Google’s software in their products. (BBC)(CNET)

Tuesday, May 7, 2013

Dish Network Bids $25.5 Billion for Sprint

Satellite TV provider Dish Network has submitted an informal $25.5 billion bid for Sprint Nextel, upping a previous offer from Japanese telecommunications company SoftBank. Dish has offered Sprint shareholders $4.76 in cash and roughly $2.24 in stock per share, which would be financed through $17.3 billion in cash and debt financing. SoftBank offered $20.1 billion in October 2012. Sprint, the third-biggest US cellular provider with 56 million subscribers, has yet to comment on the Dish proposal. (ZDNet)(CNNMoney)(The New York Times)(Dish)

Monday, May 6, 2013

Is IPv6 Secure Enough?

by George Lawton

Proponents are pushing network operators and equipment makers to adopt IPv6.

Supporters say increased utilization will result in a better protocol that provides many more IP addresses for the huge number of Internet-connected devices than its predecessor, IPv4. The Internet Assigned Numbers Authority gave the last IPv4 addresses to regional Internet registries in 2011.

On 6 June 2012, backers sponsored World IPv6 Launch day, during which participating websites enabled the protocol permanently. In addition, ISPs offered IPv6 connectivity and router manufacturers provided devices enabled for the technology by default.

Despite the ongoing campaign, numerous experts contend that IPv6 raises significant security concerns that adopters must address.

For example, they say, best security practices for IPv6 routers, firewalls, and spam filters have not been well developed and implemented.

There are also concerns that Windows machines now turn on IPv6 tunneling by default. With this approach, legacy IPv4 networks can carry IPv6 traffic by encapsulating and tunneling IPv6 packets across IPv4 networks.

However, this could create security problems for organizations that have such IPv4 networks but haven't deployed security measures to deal with malicious IPv6 packets.

Jeremy Duncan, senior director at security vendor Salient Federal Solutions, said there have already been several IPv6 denial-of-service (DoS) and spam attacks because many existing routers, firewalls, and other gateway devices can't protect against them yet.

"There is a small percentage of the attacker community that is knowledgeable about IPv6," said IPv6 security expert Scott Hogg, director of technology solutions at consultancy GTRI and chair of the Rocky Mountain IPv6 Task Force.

Some hackers, he added, don't even know about IPv6 vulnerabilities but launch general attacks that happen to exploit IPv6 networks' weaknesses.

The Internet Engineering Task Force began developing IPv6 in 1992 when the IETF saw that the increase in Internet activity would use up the limited number of IPv4 addresses. The group released IPv6 in 1996.

IPv4 uses a 32-bit address space, allowing for 2^32, or about 4.3 billion, unique addresses.

IPv6 uses a 128-bit address space, allowing for 2^128, or about 3.4×10^38, addresses.
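
The two address-space sizes can be checked directly. The following Python snippet is a small illustration, not part of the original reporting; it computes both totals and cross-checks them against the standard library's ipaddress module.

```python
import ipaddress

# Number of distinct addresses in each protocol's address space.
ipv4_total = 2 ** 32
ipv6_total = 2 ** 128

# Cross-check: the "whole Internet" prefixes cover every address.
assert ipaddress.ip_network("0.0.0.0/0").num_addresses == ipv4_total
assert ipaddress.ip_network("::/0").num_addresses == ipv6_total

print(f"IPv4: {ipv4_total:,} addresses")
print(f"IPv6: {ipv6_total:.1e} addresses")
print(f"IPv6 offers 2^{128 - 32} times as many addresses as IPv4")
```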

Google has collected statistics that indicate that IPv6 global aggregate usage has grown from 0.2 percent of all Internet traffic in early 2010 to 0.75 percent in mid-2012.

Newer operating systems and networking equipment support IPv6. However, many older IPv4 devices are still in use.

According to GTRI's Hogg, a key issue is the lack of time IT workers have spent learning about IPv6, even though their networks use the technology.

IPv6 has different security challenges than IPv4, he explained. "Most security practitioners have not invested the time to learn about these differences and formulate plans on how to secure IPv6," he said.

IPv6 code development for security is immature, according to Jeff Doyle, president of IP-network consultancy Jeff Doyle and Associates.

Vendors have just begun implementing and testing useful IPv6 security approaches, which are too new to have been proven safe, he explained.

One problem occurs because IPv6 networks create tunnels for sending traffic across IPv4 networks by encapsulating IPv6 data into IPv4 packets.

IPv4 equipment, including firewalls, cannot easily decode the traffic based on the newer protocol for security inspection.

Thus, hackers could send malware and spam that IPv4 security equipment couldn't detect.
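
To make the inspection gap concrete, here is a minimal Python sketch of this kind of tunneling, assuming the simplest scheme in which a complete IPv6 packet rides as the payload of an IPv4 packet whose protocol field is 41. The addresses are documentation-only examples, the function names are ours, and the IPv4 checksum is left at zero for brevity. A device that reads only the outer header sees an ordinary IPv4 packet; it has to look inside deliberately to find the IPv6 traffic.

```python
import ipaddress
import struct
from typing import Optional

IPPROTO_IPV6 = 41  # IPv4 protocol number meaning "payload is an IPv6 packet"


def build_ipv6_packet(payload: bytes) -> bytes:
    """Build a bare IPv6 packet around opaque payload bytes."""
    src = ipaddress.IPv6Address("2001:db8::1").packed
    dst = ipaddress.IPv6Address("2001:db8::2").packed
    # Version 6, traffic class 0, flow label 0; next header 59 ("no next
    # header") because the payload here is just illustrative filler.
    fixed = struct.pack("!IHBB", 6 << 28, len(payload), 59, 64)
    return fixed + src + dst + payload


def encapsulate_in_ipv4(inner: bytes) -> bytes:
    """Wrap an IPv6 packet in a 20-byte IPv4 header (checksum left at 0)."""
    src = ipaddress.IPv4Address("192.0.2.1").packed
    dst = ipaddress.IPv4Address("198.51.100.1").packed
    header = struct.pack(
        "!BBHHHBBH4s4s",
        0x45, 0, 20 + len(inner),  # version/IHL, TOS, total length
        0, 0,                      # identification, flags/fragment offset
        64, IPPROTO_IPV6, 0,       # TTL, protocol, checksum (simplified)
        src, dst,
    )
    return header + inner


def extract_inner_ipv6(outer: bytes) -> Optional[bytes]:
    """Return the tunneled IPv6 packet, or None if there is none."""
    if len(outer) < 20 or outer[0] >> 4 != 4:
        return None
    header_length = (outer[0] & 0x0F) * 4
    if outer[9] != IPPROTO_IPV6:
        return None
    inner = outer[header_length:]
    if len(inner) < 40 or inner[0] >> 4 != 6:
        return None
    return inner


inner = build_ipv6_packet(b"hello")
outer = encapsulate_in_ipv4(inner)
print("Outer IP version:", outer[0] >> 4)
print("Outer protocol field:", outer[9])
print("Inner IPv6 packet recovered:", extract_inner_ipv6(outer) == inner)
```

An inspection tool that stops at the outer protocol field never evaluates the inner packet, which is the gap the experts describe.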

Some older IPv6 implementations don't support newer security technologies, including those that provide built-in authentication and encryption.

Another problem is the IPv6-attack tools that people have created and posted online for use by unskilled hackers.

For example, said Salient Federal's Duncan, one prominent group — the Hackers Choice (THC) — has updated one of its tools to include exploits for LAN-based IPv6 equipment.

THC says it has done this to make public the vulnerabilities it finds so that people will fix them.

However, the toolkit also lets hackers fake router advertisements, which routers use to announce themselves on a link. Hackers could use fake RAs to overwhelm a router and thereby stall traffic.

IPv6 offers rich extension headers that carry information that promises more granular networking control in areas such as routing, data encryption, and authentication.

However, vendors are just learning how to securely support these extensions.

In one case, a researcher used an extra-long extension header to overwhelm a router, allowing potentially malicious packets through without authentication.

Older IPv6 equipment supported by default the protocol's Type 0 routing headers, designed to list the intermediate nodes at which packets will stop on the way to their destination. This is designed to improve network performance.

However, hackers could construct packets that use the Type 0 headers to travel between two routers multiple times, resulting in a DoS attack.

Newer IPv6 equipment has support for Type 0 routing headers turned off by default.
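
As an illustration of how a filter might spot such packets, the Python sketch below walks an IPv6 packet's extension-header chain and reports whether it contains a routing header of Type 0. It is a simplified sketch, not any vendor's implementation: the function names are ours, the addresses are documentation examples, and only the most common extension headers are handled.

```python
import ipaddress
import struct

# IPv6 "next header" values for the extension headers handled here.
HOP_BY_HOP, ROUTING, FRAGMENT, DEST_OPTS = 0, 43, 44, 60
NO_NEXT_HEADER = 59


def has_type0_routing_header(packet: bytes) -> bool:
    """Return True if the packet carries a Type 0 routing header."""
    if len(packet) < 40 or packet[0] >> 4 != 6:
        return False
    next_header = packet[6]
    offset = 40  # extension headers start after the 40-byte fixed header
    while next_header in (HOP_BY_HOP, ROUTING, FRAGMENT, DEST_OPTS):
        if offset + 8 > len(packet):
            return False  # truncated header chain
        if next_header == ROUTING and packet[offset + 2] == 0:
            return True
        if next_header == FRAGMENT:
            length = 8  # the fragment header has a fixed size
        else:
            # Length field counts 8-byte units beyond the first 8 bytes.
            length = (packet[offset + 1] + 1) * 8
        next_header = packet[offset]
        offset += length
    return False


def build_packet(routing_type: int) -> bytes:
    """Build a test packet with one routing header listing one hop."""
    src = ipaddress.IPv6Address("2001:db8::1").packed
    dst = ipaddress.IPv6Address("2001:db8::2").packed
    hop = ipaddress.IPv6Address("2001:db8::3").packed
    # next header, length (2 units = one address), type, segments left,
    # then 4 reserved bytes and the intermediate address.
    routing = struct.pack("!BBBB4x", NO_NEXT_HEADER, 2, routing_type, 1) + hop
    fixed = struct.pack("!IHBB", 6 << 28, len(routing), ROUTING, 64)
    return fixed + src + dst + routing


print("Type 0 flagged:", has_type0_routing_header(build_packet(0)))
print("Type 2 flagged:", has_type0_routing_header(build_packet(2)))
```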

IPv6 has several security features such as IPsec, which authenticates and encrypts each IP packet used during communications.

However, Salient Federal's Duncan noted, older equipment doesn't always have IPsec turned on by default.

IEEE 802.1X provides access control via the authentication of routers trying to communicate with the network.

The IETF's IPv6 Router Advertisement Guard (RA-Guard) analyzes RAs and filters out bogus ones sent from unauthorized routers. This helps counter router spoofing.

However, Windows doesn't natively support these capabilities, so organizations must deploy RA-Guard drivers on each of their computers to protect them.
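
The filtering idea behind RA-Guard can be sketched in a few lines of Python. This is a deliberately simplified model, not the IETF specification or any product's behavior: it assumes messages have already been parsed into a small record, and it decides only on the claimed source address, which a real deployment would not trust on its own because source addresses can be forged. All names below are hypothetical.

```python
import ipaddress
from dataclasses import dataclass

ICMPV6_ROUTER_ADVERTISEMENT = 134  # ICMPv6 type used by RAs


@dataclass(frozen=True)
class Icmpv6Message:
    """Hypothetical, already-parsed view of an ICMPv6 message."""
    icmp_type: int
    source: str


def make_ra_filter(authorized_routers):
    """Return a function that drops RAs from unlisted sources."""
    allowed = {ipaddress.IPv6Address(addr) for addr in authorized_routers}

    def allow(message: Icmpv6Message) -> bool:
        if message.icmp_type != ICMPV6_ROUTER_ADVERTISEMENT:
            return True  # this sketch filters only router advertisements
        return ipaddress.IPv6Address(message.source) in allowed

    return allow


allow = make_ra_filter(["fe80::1"])
print(allow(Icmpv6Message(134, "fe80::1")))    # RA from the listed router
print(allow(Icmpv6Message(134, "fe80::bad")))  # RA from an unknown source
print(allow(Icmpv6Message(128, "fe80::bad")))  # echo request, not an RA
```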

The best practices for addressing IPv6 security issues are generally the same as those used with IPv4, said GTRI's Hogg.

However, in many cases, organizations must update their networking equipment to support the latest IPv6 capabilities, said consultant Doyle.

This will entail a simple software upgrade in some cases or, for equipment using dedicated-purpose chips that can't be upgraded, a full platform change.

Moreover, Doyle said, companies must make sure their IT personnel are fully trained in IPv6 security.

Businesses could also use deep-packet inspection tools to analyze IPv6 traffic more carefully.

Some organizations are offering security bounties to help find vulnerabilities. Will Brown, associate vice president of product development for network-equipment vendor D-Link, said, "We are working directly with the security community … and have created a reward program for disclosing any issues that can be verified."

Hogg stated, "We need security vendors to address IPv6 in all aspects of their security products to provide defenders [with] protection before they deploy IPv6."

Doyle predicted IPv6 will be a major concern to IT organizations and vendors for the next couple of years, as new vulnerabilities are discovered and addressed.

But in the long run, he said, as firewalls, spam filters, and packet-inspection tools improve, securing IPv6 will become routine.

Sunday, May 5, 2013

Businesses Turn to Object Storage to Handle Growing Amounts of Data

by Sixto Ortiz Jr.

Big Data has gotten so big that traditional, hierarchical file systems are straining to keep up with today's exponential information growth.

As businesses collect more and more information — particularly unstructured data such as multimedia files — administrators are having trouble managing, indexing, accessing, and securing the material.

The challenge with traditional file systems is maintaining their hierarchical organization and central data indices as the number of files and the amount of unstructured information grows.

In response, companies are turning to object storage, which stores data as variable-size objects rather than fixed-sized blocks.

Rather than housing information that can only be found somewhere in a hierarchical system, object storage uses unique identifier addresses to locate and identify data objects, explained Russ Kennedy, vice president for product strategy, marketing, and customer relations at object-storage vendor Cleversafe.

Object stores have nonhierarchical, near-infinite address spaces, said Mike Matchett, a senior analyst with the Taneja Group, a market-research firm.

Thus, even as the amount of data grows, the storing and finding of information doesn't become more complicated.

Nonetheless, widespread object-storage use faces several challenges.

Traditional storage systems house data in fixed-size blocks in directories, folders, and files. There is a limit to how many files can be housed in this hierarchical system, said Jeff Lundberg, Hitachi Data Systems' senior product marketing manager for file, content, and cloud.

Users can't go directly to information but instead must work via a central index, noted Janae Stow Lee, senior vice president of the File System And Archive Product Group at storage vendor Quantum Corp.

A complicating factor is that because of the increase in multimedia, Kennedy noted, file sizes are growing to the gigabyte and even terabyte range.

As the amount of data and number of files have grown, current storage systems have become very large, explained Tom Leyden, director of alliances and marketing for object-storage vendor Amplidata. This makes finding information in their huge hierarchies increasingly difficult, he said.

The difficulty of trying to find data via increasingly large indices limits the number of files and amount of data traditional storage systems can work with, said the Taneja Group's Matchett.

And as traditional systems store more data, they become more likely to experience mechanical drive failure. Administrators then must copy data to additional systems to guarantee reliability and availability, which could be cost prohibitive for some organizations.

As information volumes grow, added Quantum's Stow Lee, traditional file systems' data-replication approaches become too expensive and time-consuming to use.

Data backups also become costly, which could create serious problems for organizations that need timely recovery points, noted Tad Hunt, chief technology officer of storage vendor Exablox.

Many companies are using storage-area networks and network-attached storage to cope with spiking data volumes, but these approaches typically use hierarchical file systems and thus are also beginning to experience problems, noted Ross Turk, vice president of community at storage consultancy Inktank.

Work on object-storage technology began in 1994 at Carnegie Mellon University and has been supported over the years by the National Storage Industry Consortium and the Storage Networking Industry Association.

However, there was no big need for object storage until recently.

Numerous vendors — such as Amplidata, Caringo, Cleversafe, DataDirect Networks, Exablox, and Quantum — are now developing and selling object-storage products.

Searching for specific content in a large traditional file hierarchy requires analysis of the entire index and the reading of long lists of nodes and their contents, explained Dustin Kirkland, chief technology officer at Gazzang, a security and operations diagnostics company.

This process can consume considerable time and CPU resources, he noted.

Many organizations are thus turning to object storage, which uses the same types of hardware systems as the traditional approach but stores files as objects, which are self-contained groups of logically related data. The information is stored nonhierarchically, with an object identifier and metadata that provides descriptive attributes about the information.

Applications that interface with object-storage systems use identifiers to access objects easily and directly, wherever they are. The objects thus aren't tied to a physical location on a disk or predefined organizational structure. To applications, all of the information appears as one big pool of data.
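The idea of a flat pool of objects addressed by identifier can be sketched in a few lines of Python. This is only a toy in-memory illustration, not any vendor's design: the `ObjectStore` class, its method names, and the choice of a SHA-256 content hash as the identifier are all assumptions made for the example.

```python
import hashlib


class ObjectStore:
    """Toy in-memory object store: one flat namespace keyed by object identifier."""

    def __init__(self):
        # A single flat dictionary; there are no directories or paths.
        self._objects = {}

    def put(self, data: bytes, metadata: dict) -> str:
        # Assumption: the identifier is derived from the content.
        # Real systems may assign identifiers in other ways.
        object_id = hashlib.sha256(data).hexdigest()
        self._objects[object_id] = {"data": data, "metadata": dict(metadata)}
        return object_id

    def get(self, object_id: str) -> bytes:
        # Direct lookup by identifier; no hierarchy is traversed.
        return self._objects[object_id]["data"]

    def metadata(self, object_id: str) -> dict:
        # Return a copy so callers cannot mutate the stored metadata.
        return dict(self._objects[object_id]["metadata"])


store = ObjectStore()
video_id = store.put(b"fake video bytes", {"type": "video", "owner": "alice"})
print(video_id[:12], store.metadata(video_id))
```

The application keeps only the identifier and never needs to know where, or on which device, the bytes are held.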

There is no large central index that users must work through to access data. Such indices act as a bottleneck in traditional storage systems, noted Quantum's Lee. Doing without them lets object-based systems add storage hardware and scale well.

Objects carry more metadata than files in traditional storage systems. According to Amplidata's Leyden, this makes finding data much easier for searchers.

This also lets companies apply detailed policies — such as file-access controls — to objects for more efficient and automated management.
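As a rough illustration of metadata-driven search and policy, the sketch below filters a small catalog by descriptive attributes and checks an access rule stored alongside each object. The catalog contents, the `readers` field, and both function names are hypothetical, and a production system would not scan every object linearly as this toy does.

```python
# Hypothetical catalog: object identifier -> metadata. All values are illustrative.
CATALOG = {
    "obj-001": {"type": "video", "owner": "alice", "readers": {"alice", "bob"}},
    "obj-002": {"type": "image", "owner": "bob", "readers": {"bob"}},
    "obj-003": {"type": "video", "owner": "bob", "readers": {"bob", "carol"}},
}


def find_by_metadata(catalog, **criteria):
    """Return the sorted identifiers whose metadata matches every criterion."""
    return sorted(
        object_id
        for object_id, meta in catalog.items()
        if all(meta.get(key) == value for key, value in criteria.items())
    )


def can_read(catalog, object_id, user):
    """Apply a simple access policy kept in the object's own metadata."""
    return user in catalog[object_id]["readers"]


print(find_by_metadata(CATALOG, type="video"))
print(can_read(CATALOG, "obj-001", "carol"))
```

Because the policy travels with the object, the same check works wherever the object is stored.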

Object storage also simplifies data management and use because administrators don't have to organize and manage hierarchies, according to Cleversafe's Kennedy.

And, he said, the systems are less expensive to set up and operate because they are less complex and highly scalable, and also require fewer administrators.

Object storage — which enables easier, quicker data access than traditional systems — saves money because it can work with slower, less expensive drives without losing performance.

Object-based systems typically secure information via Kerberos, Simple Authentication and Security Layer, or some other Lightweight Directory Access Protocol-based authentication mechanism, Kennedy noted.

Object storage systems' scalability; suitability for use with lower-cost, high-capacity hard drives; and improved automation make the approach good for cloud computing, he said.

Because it is highly scalable and enables easy information access even from large data collections, object storage is best for large unstructured files such as those containing multimedia.

The approach also suits unstructured data because this type of information doesn't always fit easily into the hierarchical structures that traditional storage uses.

Currently, Leyden said, object storage is used mostly in cloud applications like Dropbox, Amazon's Simple Storage Service, and Google's Picasa photo-storage program. These applications form the basis for cloud-based services such as file sharing, backups, and archiving.

The widespread use of object storage faces several challenges.

Some companies have to rewrite their application interfaces to use object-storage APIs natively, said Quantum's Lee.

The security and privacy of data in object-storage systems is an important issue, said Gazzang's Kirkland.

He explained, "All information should, without question, be encrypted before being written to disk. Object storage without comprehensive encryption should be as unfathomable in 2012 as a minivan without seat belts."

According to Matchett, object-storage use is already spreading, particularly in public and private cloud implementations.

Jeffrey Bolden, managing partner at IT consultancy Blue Lotus SIDC, said object storage will remain a niche technology.

He noted that traditional file systems enforce relational integrity — which ensures that relationships between tables remain consistent despite any changes that may be made to information in the database — while object storage doesn't.

Quantum's Stow Lee said object storage will be a niche application at first—primarily for customers needing at least 500 terabytes of storage—but then will be widely adopted as the technology improves and cloud services grow in popularity.

However, she added, no storage technology is best for all uses, so traditional file systems will still be around.

Saturday, May 4, 2013

Nanomedicine: a new frontier

Artist’s conception of membranes forming on a gold film with nanopores. Membranes are delivered as balloonlike vesicles that break open on contact to form flat sheets. Green circles are proteins embedded in the membrane that extend down into the nanopores. Biosensors can detect and characterize the interactions between membrane proteins and molecules such as antibodies.

Everything our bodies do depends on interactions that happen on a nanoscale, the realm of atoms and small molecules. Today, medicine is catching up.

At the University of Minnesota, nanomedicine researchers are pushing forward with projects like new drug-delivery technologies and better screening of potential drugs.

April 29, 2013

Feature

Nanoparticles against cancer

In cancer biology, for example, mechanical engineering professor John Bischof, chemistry associate professor Christy Haynes, and radiology professor Michael Garwood are out to deliver nanoparticles of iron oxide to tumors and kill them with heat while sparing healthy tissue.

“We want to get the temperature of the nanoparticles above 45 degrees C [113 F],” says Bischof, whose expertise is heating measurements.

This is possible by applying alternating magnetic fields around the nanoparticles inside living tissue. The applied fields make the particles’ magnetic fields flip direction quickly, or they roll the particles back and forth, creating friction. These motions heat the particles within tumor cells that contain them, but not normal cells, which don’t.

Ideally, the team would inject enough iron oxide into a tumor to achieve more than 1 milligram of iron per gram of tumor tissue. It is important to know how many of the nanoparticles have been absorbed by a tumor so as not to overtreat.

“Unfortunately, clinical imaging like ultrasound or computed tomography [CT] can’t accurately measure iron concentrations in that range,” Bischof says.

However, at the U’s Center for Magnetic Resonance Research, Garwood has developed SWIFT, a new technology that can. At present, no other imaging technology is capable of this, Bischof says.

To improve stability and heating properties, Haynes applies coats—10-20 nanometers thick—of a special “mesoporous” silica. This silica naturally contains pores, into which molecules of anti-cancer drugs can be added for a one-two punch.

Dartmouth College researcher Jack Hoopes depends on this work as he, along with physicians, moves toward bringing iron oxide thermotherapy to patients. Clinical trials with breast cancer patients are scheduled to begin this year.

Are they toxic?

Nanomedicine also concerns the toxicity of nanoparticles. When inhaled in quantity, they can be harmful. But what if they’re ingested and get into the bloodstream?

Christy Haynes has tested commonly used nanoparticles—made from silica, titanium, gold, and silver—for toxicity to several types of cells from the immune systems of lab animals and humans.

“With the four types of particles, we almost always see 80 percent viability of the cells,” she says. In other words, the cells held up pretty well. Haynes hopes to learn if this result will apply to other cells with similar functions. She also wants to answer big questions like: How long do nanoparticles last in the body? and How are they excreted?

Drug screening

If a drug is to elicit some response from a cell, it first must interact with a protein “receptor” embedded in the cell’s outer membrane. A good candidate drug is one that interacts strongly, but measuring the strength of interactions is inefficient because most tests in use only tell whether or not an interaction occurs.

But that’s about to change.

“We’re developing optical sensors to study how proteins interact with molecules,” says Sang-Hyun Oh, an associate professor of electrical and computer engineering. “Membrane proteins are the main targets.”

Oh and his colleagues have developed a way to fabricate ultrathin gold films containing nanoscale pores in a precise array. In experiments, they lay a membrane containing receptors over a film, with the receptors protruding from the membrane both downward into the pores and upward. Next, they add a candidate drug to be tested.

Under laser light, electrons in the gold atoms resonate and funnel the light down the pores and into a detector. This response is very sensitive to how a drug candidate interacts with a receptor.

Oh and his team created the instrument that measures the interaction strength. They are collaborating with Mayo Clinic neuroscientists who have developed antibodies to potentially treat multiple sclerosis by restoring neurons’ myelin sheaths, which the cells need to function normally.

“With rodents, we found that the antibodies attached to membranes of [myelin-forming cells] and triggered them to initiate repair,” says Oh.

Without Oh’s group, the Mayo Clinic scientists would have to rely on costly, inefficient testing of the antibodies they produce. Clinical trials with MS patients will begin soon.

Tags: College of Science and Engineering, Academic Health Center

____________________________________________________________________________________________________________

TK Recommends

____________________________________________________________________________________________________________

UM News (2013).

Nanomedicine: a new frontier

University of Minnesota

____________________________________________________________________________________________________________

Friday, May 3, 2013

What does the Muse CD cover have to do with Medical Imaging?

Indeed, this image is truly beautiful and remarkable. It is an image of the white matter fibers in the brain obtained with diffusion MRI. The image was obtained by the Human Connectome Project, a 5-year project funded by NIH to map the networks of the human brain. These networks will show how different regions of the brain communicate and give insight into the brain's anatomical and functional organization. The project also aims to produce data that will help in understanding brain diseases such as Alzheimer's disease. The data is available to the scientific community.

So how do you obtain these networks? By applying computer algorithms to data obtained with different neuroimaging techniques: MRI, fMRI, and diffusion MRI, among others. These algorithms come from graph theory. Applying them is extremely useful because they analyze the network, reduce its complexity, and find similarities and differences between networks.
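To give a flavor of what such graph-theory algorithms compute, here is a minimal sketch of two standard network measures, node degree and the local clustering coefficient, on a made-up four-region network. The region names and connections are purely hypothetical and are not Human Connectome Project data.

```python
# Hypothetical undirected network: region -> set of directly connected regions.
NETWORK = {
    "A": {"B", "C"},
    "B": {"A", "C"},
    "C": {"A", "B", "D"},
    "D": {"C"},
}


def degrees(adjacency):
    """Number of connections of each node."""
    return {node: len(neighbors) for node, neighbors in adjacency.items()}


def clustering_coefficient(adjacency, node):
    """Fraction of pairs of a node's neighbors that are themselves connected."""
    neighbors = sorted(adjacency[node])
    k = len(neighbors)
    if k < 2:
        return 0.0  # No neighbor pairs exist, so the measure is taken as zero.
    linked_pairs = sum(
        1
        for i in range(k)
        for j in range(i + 1, k)
        if neighbors[j] in adjacency[neighbors[i]]
    )
    return 2.0 * linked_pairs / (k * (k - 1))


print(degrees(NETWORK))
print({node: clustering_coefficient(NETWORK, node) for node in NETWORK})
```

Measures like these summarize a large network with a few numbers per node, which is one way the complexity is reduced and different networks are compared.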

A very nice science article for researchers not familiar with the topic:

http://www.sciencemag.org/site/products/lst_20130118.xhtml

To know more about obtaining diffusion MRI data or network methods, look into these two articles:

- Hasan, K., Walimuni, I., Abid, H., & Hahn, K. (2011). A review of diffusion tensor magnetic resonance imaging computational methods and software tools. Computers in Biology and Medicine, 41 (12), 1062-1072. DOI: 10.1016/j.compbiomed.2010.10.008

- Kaiser, M. (2011). A tutorial in connectome analysis: Topological and spatial features of brain networks. NeuroImage, 57 (3), 892-907. DOI: 10.1016/j.neuroimage.2011.05.025

Thursday, May 2, 2013

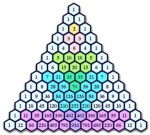

Tartaglia-Pascal triangle and quantum mechanics

The paper I wrote with Alfonso Farina and Matteo Sedehi about the link between the Tartaglia-Pascal triangle and quantum mechanics is now online (see here). This paper contains a statement of my theorem that provides a connection between the square root of a Wiener process and the Schrödinger equation, which aroused a lot of interest and much criticism from some mathematicians (see here). So, it is worthwhile to tell how all this came about.

In fall 2011, Alfonso Farina called me because he had an open problem after he and his colleagues published a paper in Signal, Image and Video Processing, a Springer journal, showing how the Tartaglia-Pascal triangle is deeply connected with diffusion and the Fourier equation.

The connection comes from the binomial coefficients, the elements of the Tartaglia-Pascal triangle, which in a suitable limit give a Gaussian, and this Gaussian, in the continuum, is the solution of the Fourier equation of heat diffusion. This entails a deep connection with stochastic processes. For most people working in the area of radar and sensors, stochastic processes are essential to understanding, through filtering theory, how these devices measure. But in the historical perspective that Farina et al. adopted in their paper, they were not able to find a proper connection with the Schrödinger equation, although they recognized a deep formal analogy with the Fourier equation. This was the open question: how to connect the Tartaglia-Pascal triangle and the Schrödinger equation?
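The limit from binomial coefficients to a Gaussian can be checked numerically. The sketch below is only an illustration of the classical de Moivre-Laplace approximation, not the construction used in the paper: it normalizes row n of the triangle by 2^n and compares it with a Gaussian of mean n/2 and variance n/4. The function names are made up for this example.

```python
import math


def binomial_row_normalized(n):
    """Row n of the Tartaglia-Pascal triangle divided by 2**n, so it sums to 1."""
    return [math.comb(n, k) / 2**n for k in range(n + 1)]


def gaussian_approximation(n):
    """Gaussian density with mean n/2 and variance n/4, sampled at k = 0..n."""
    mean, variance = n / 2, n / 4
    norm = math.sqrt(2 * math.pi * variance)
    return [math.exp(-((k - mean) ** 2) / (2 * variance)) / norm for k in range(n + 1)]


def max_abs_difference(n):
    """Largest pointwise gap between the normalized row and the Gaussian."""
    row = binomial_row_normalized(n)
    gauss = gaussian_approximation(n)
    return max(abs(b - g) for b, g in zip(row, gauss))


for n in (10, 100, 1000):
    print(n, max_abs_difference(n))
```

The printed gap shrinks as n grows, which is the discrete-to-continuum passage described above.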

People working in quantum physics are aware of the difficulties researchers have met in linking stochastic processes à la Wiener and quantum mechanics. Indeed, skepticism is the main feeling of all of us about this matter. So, the question Alfonso put to me was not that easy. But the paper by Alfonso et al. also contains a possible answer: just start from the discrete and then go back to the continuum. So, the analog of the heat equation is the Schrödinger equation for a free particle and its kernel and, indeed, the evolution of a Gaussian wave packet can be handled on the discrete and gives back the binomial coefficients. What you get in this way are the square roots of binomial coefficients. So, the link with the Tartaglia-Pascal triangle is rather subtle in quantum mechanics and enters through a square root, reminiscent of Dirac’s work and his greatest achievement, the Dirac equation. This answered Alfonso’s question, and in a way that was somewhat unexpected.
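For readers who want the formal analogy spelled out, the two equations and their kernels can be written side by side in one dimension. This is standard textbook material rather than anything specific to the paper; the symbols D (diffusion constant), m (particle mass), u, and \psi are notation assumed here.

```latex
% Heat (Fourier) equation and its kernel
\frac{\partial u}{\partial t} = D\,\frac{\partial^2 u}{\partial x^2},
\qquad
K_{\mathrm{heat}}(x,t) = \frac{1}{\sqrt{4\pi D t}}\,
  \exp\!\left(-\frac{x^2}{4 D t}\right)

% Free-particle Schrödinger equation and its kernel
i\hbar\,\frac{\partial \psi}{\partial t}
  = -\frac{\hbar^2}{2m}\,\frac{\partial^2 \psi}{\partial x^2},
\qquad
K_{\mathrm{free}}(x,t) = \sqrt{\frac{m}{2\pi i\hbar t}}\,
  \exp\!\left(\frac{i m x^2}{2\hbar t}\right)

% Formally, the second pair follows from the first under
D \;\longrightarrow\; \frac{i\hbar}{2m}
```

The substitution is purely formal: an imaginary diffusion constant turns the decaying Gaussian into an oscillating one, which is why the step from stochastic processes to quantum mechanics is not straightforward.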

Then, I thought that this connection could be deeper than what we had found. I tried to modify Ito calculus to consider fractional powers of a Wiener process. I posted my paper on arXiv and carried out both experiments and numerical computations. All this confirms my theorem that the square root of a Wiener process has the Schrödinger equation as its diffusion equation. You can easily take the square root of a natural noise (I did it) or compute this with your preferred math software. It is interesting that mathematicians have never decided to engage with this and still claim that all this evidence does not exist, basing their claims on a theory that can easily be amended.

We have just thrown a seed in the earth. This is our main work. And we feel sure that very good fruits will come out. Thank you very much Alfonso and Matteo!

Farina, A., Frasca, M., & Sedehi, M. (2013). Solving Schrödinger equation via Tartaglia/Pascal triangle: a possible link between stochastic processing and quantum mechanics. Signal, Image and Video Processing. DOI: 10.1007/s11760-013-0473-y

Frasca, M. (2012). Quantum mechanics is the square root of a stochastic process. arXiv:1201.5091v2

Farina, A., Giompapa, S., Graziano, A., Liburdi, A., Ravanelli, M., & Zirilli, F. (2011). Tartaglia-Pascal’s triangle: a historical perspective with applications. Signal, Image and Video Processing, 7 (1), 173-188. DOI: 10.1007/s11760-011-0228-6

This entry was posted on Friday, April 26th, 2013 at 11:27 am and is filed under Mathematical Physics, Physics, Quantum mechanics.